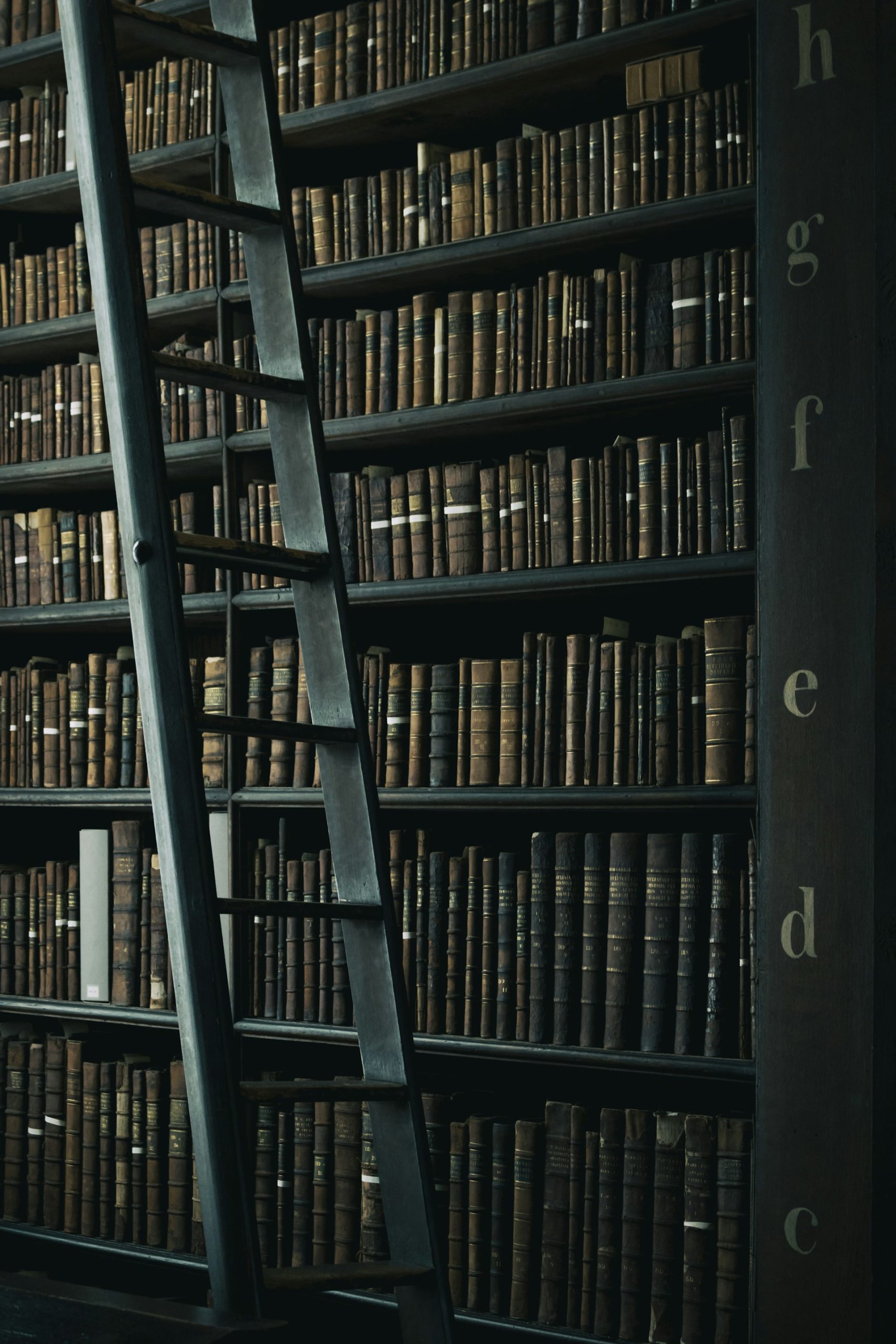

Imagine someone who has read every book in every university library in Switzerland. They can answer almost any general knowledge question. But if you ask them what happened at your place last week, or what’s in your internal documents, they have no idea. They weren’t there, and no one showed them.

That’s exactly the problem with ChatGPT or Claude: they were trained on billions of texts up to a certain date, and that’s it. They don’t know your company, your documentation, your internal PDFs. To understand how RAG works, you first have to accept this limitation.

RAG gives the model access to your personal library

RAG stands for Retrieval-Augmented Generation. In practice:

- You ask a question

- The system searches your documents for the most relevant passages

- It adds them to the context sent to the model

- The model answers based on those passages

It’s as if, before answering, that well-read friend quickly grabbed the three most useful pages from your library and skimmed them first.
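The four steps above can be sketched in a few lines of Python. This is a toy illustration, not a production setup: the passages are invented, and word-overlap similarity stands in for the embedding-based search that real systems typically use. The names `retrieve` and `build_prompt` are hypothetical.

```python
import math
import re
from collections import Counter

# Hypothetical passages standing in for chunks of your own documents.
PASSAGES = [
    "Bin collection in the old town takes place every Tuesday morning.",
    "The town hall is open Monday to Friday from 8:00 to 17:00.",
    "Parking permits can be renewed online or at the town hall counter.",
]

def tokenize(text: str) -> Counter:
    """Lowercase the text and count its words (a crude stand-in for embeddings)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def retrieve(question: str, passages: list[str], k: int = 2) -> list[str]:
    """Step 2: return the k passages most similar to the question."""
    q = tokenize(question)
    ranked = sorted(passages, key=lambda p: cosine(q, tokenize(p)), reverse=True)
    return ranked[:k]

def build_prompt(question: str, passages: list[str]) -> str:
    """Step 3: add the retrieved passages to the context sent to the model."""
    context = "\n".join(f"- {p}" for p in passages)
    return f"Answer using only these passages:\n{context}\n\nQuestion: {question}"

question = "When is bin collection?"          # Step 1: the user asks a question
top = retrieve(question, PASSAGES, k=1)
prompt = build_prompt(question, top)
print(prompt)  # Step 4 would send this prompt to the model of your choice.
```

The call to the model itself is deliberately left out, since it depends on the provider you use; everything RAG-specific happens before that call.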

Why not just hand it all the documents at once?

Because models have a working memory limit, called the context window. You can’t feed them 10,000 pages in one go. RAG solves this by selecting only what’s relevant to this specific question.
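One way to picture this selection is as packing a limited budget. The sketch below assumes the passages are already ranked by relevance, and it estimates tokens at roughly four characters each, which is only a rule of thumb and not a real tokenizer. The function names and the budget value are hypothetical.

```python
def estimate_tokens(text: str) -> int:
    """Very rough estimate: about 4 characters per token (an assumption)."""
    return max(1, len(text) // 4)

def pack_context(ranked_passages: list[str], budget: int) -> list[str]:
    """Keep the best-ranked passages that fit within the token budget."""
    selected: list[str] = []
    used = 0
    for passage in ranked_passages:  # assumed sorted, most relevant first
        cost = estimate_tokens(passage)
        if used + cost > budget:
            continue  # does not fit; a shorter passage further down still might
        selected.append(passage)
        used += cost
    return selected

# Three dummy passages of roughly 100, 200 and 50 tokens.
ranked = ["a" * 400, "b" * 800, "c" * 200]
chosen = pack_context(ranked, budget=160)
print([len(p) for p in chosen])
```

With a budget of 160 estimated tokens, the first and third passages fit and the second is skipped. A real system would use the model's own tokenizer and leave room for the question and the answer.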

A concrete example

A local council sets up a chatbot. Without RAG, the model makes up answers about bin collection times. With RAG, it first searches the official schedule PDF, answers from what it finds there, and cites the source. Hallucinations on local facts become far less likely.

This is exactly how RAG works in production: what we build at Liip with LiipGPT is a RAG layer on top of document sources specific to each client.
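To make the council example more tangible, here is a minimal sketch of how a retrieved passage can carry its source into the prompt so that the answer can cite it. It is an illustration under assumptions, not a description of how LiipGPT is implemented: the `Passage` class, the file name and the schedule are all made up.

```python
from dataclasses import dataclass

@dataclass
class Passage:
    text: str
    source: str  # file name and page, kept so the answer can cite it

def format_context(passages: list[Passage]) -> str:
    """Number each passage and attach its source so the model can refer to it."""
    return "\n".join(
        f"[{i}] {p.text} (source: {p.source})"
        for i, p in enumerate(passages, start=1)
    )

def build_cited_prompt(question: str, passages: list[Passage]) -> str:
    """Ask for an answer grounded in the passages, with a citation or a refusal."""
    return (
        "Answer using only the numbered passages below and cite the passage number.\n"
        "If the passages do not contain the answer, say that you do not know.\n\n"
        f"{format_context(passages)}\n\nQuestion: {question}"
    )

# Hypothetical retrieved passage; the file name and schedule are invented.
retrieved = [
    Passage("Bins are collected every Tuesday morning.", "collection-schedule.pdf, p. 2")
]
cited_prompt = build_cited_prompt("When are the bins collected?", retrieved)
print(cited_prompt)
```

Telling the model to admit when the passages do not contain the answer matters as much as the citation: it is what keeps the chatbot from falling back on invented collection times.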

In summary

| | LLM alone | LLM + RAG |
|---|---|---|
| General knowledge | ✅ | ✅ |
| Your internal documents | ❌ | ✅ |
| Recent data | ❌ | ✅ (if indexed) |
| Risk of hallucination on facts | High | Reduced |

Understanding how RAG works means understanding why LLMs become genuinely useful in a real business context. The quality of the search and the source documents remains the deciding factor.